Integrating AI to Reduce Training Drop-off

A data-driven approach to solving silent churn in B2B VR training



Users Were Dropping Off — But Why?

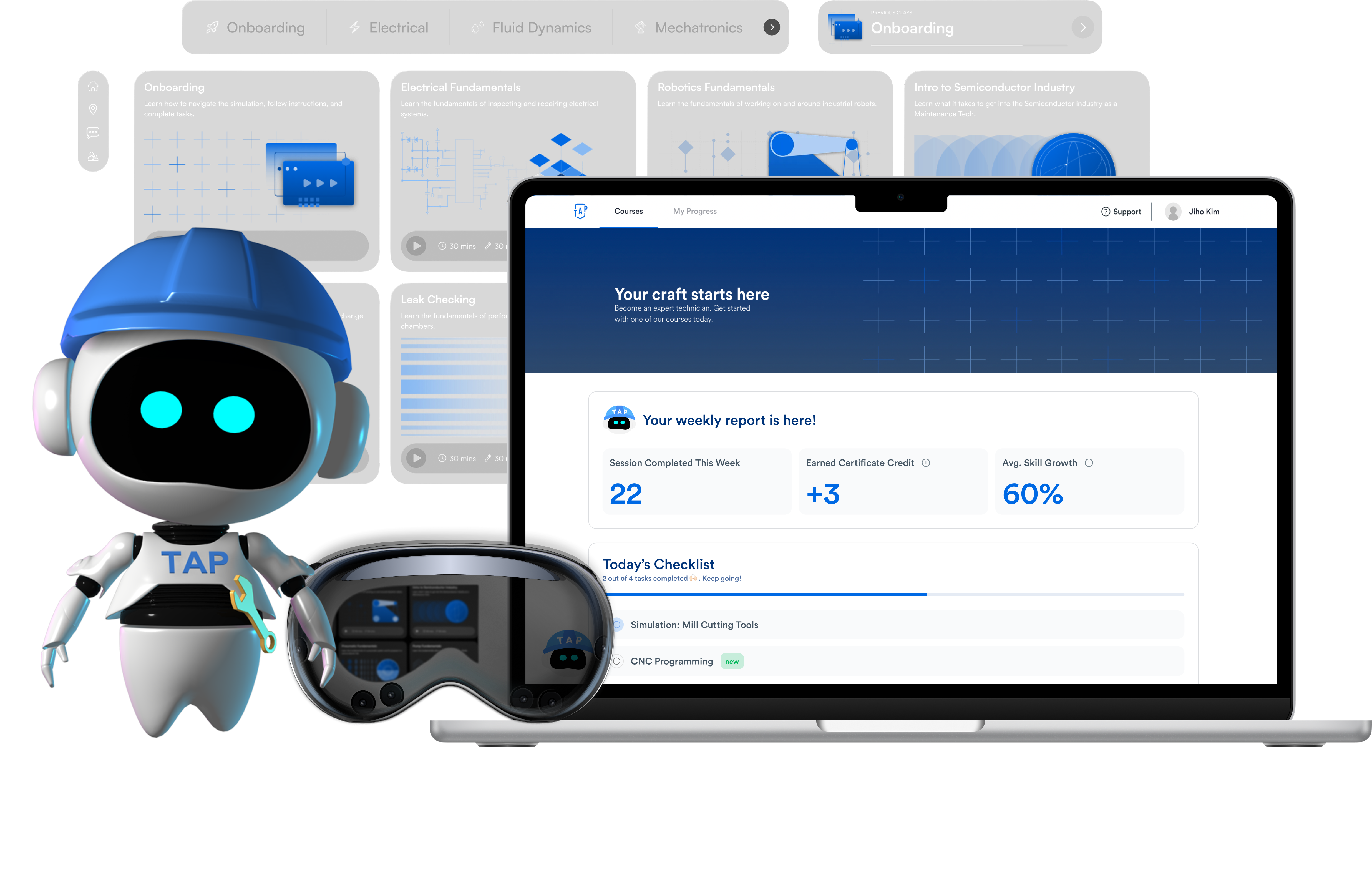

TAP3D is a B2B VR training platform preparing workers for advanced manufacturing roles, offering a seamless experience between VR and Web.

Despite this, internal data revealed a significant lag in the training completion cycle. Users struggled to finish training and reach the review stage, which directly hurt customer retention metrics.

Usage logs showed a clear pattern — learners were dropping off early, unable to keep pace with initial modules. The quantitative data showed where they dropped off, but not why.
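The "where" half of that analysis can be sketched as a simple funnel over completion events. This is a minimal illustration, not TAP3D's actual pipeline: the `(learner_id, module_index)` event shape, the function names, and the sample data are all assumptions made up for the example.

```python
# Hypothetical event shape: (learner_id, module_index) for each completed module.
# The sample data below is invented for illustration, not real usage data.
SAMPLE_EVENTS = [
    ("a", 1), ("a", 2), ("a", 3),
    ("b", 1),
    ("c", 1), ("c", 2),
    ("d", 1),
]


def furthest_module(events):
    """Map each learner to the highest module index they completed."""
    furthest = {}
    for learner_id, module_index in events:
        furthest[learner_id] = max(furthest.get(learner_id, 0), module_index)
    return furthest


def funnel(events, total_modules):
    """Return (module, learners_reached, drop_off_from_previous_module) rows."""
    furthest = furthest_module(events)
    rows = []
    previous = None
    for module in range(1, total_modules + 1):
        reached = sum(1 for m in furthest.values() if m >= module)
        # The first module has no predecessor; also guard against dividing by zero.
        drop = 0.0 if not previous else 1 - reached / previous
        rows.append((module, reached, round(drop, 2)))
        previous = reached
    return rows


if __name__ == "__main__":
    for module, reached, drop in funnel(SAMPLE_EVENTS, total_modules=3):
        print(f"module {module}: {reached} learners, drop-off {drop:.0%}")
```

A table like this pinpoints the module where learners stall, but it says nothing about motivation, which is why the interviews were needed.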

9 Interviews. 3 Barriers.

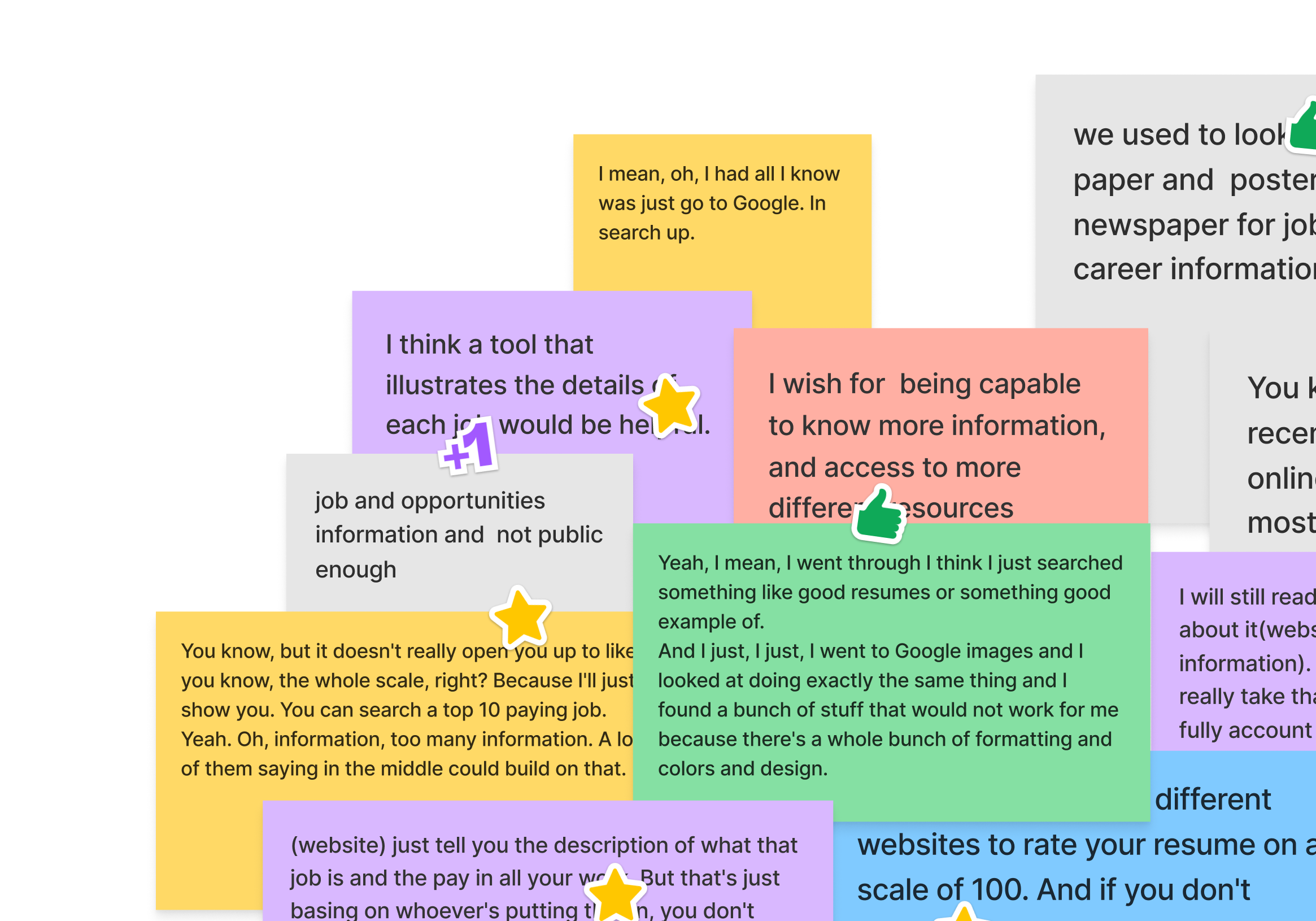

To uncover the root cause, I conducted 9 user interviews and performed affinity mapping on 200+ coded quotes to identify recurring themes.
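Once quotes are coded, the tallying step of affinity mapping can be sketched in a few lines. This is only an illustration under assumptions: the `(participant, theme, quote)` tuple shape, the placeholder theme labels, and the sample rows are hypothetical, and the real synthesis was done on a board rather than in code.

```python
from collections import defaultdict

# Hypothetical coded quotes: (participant, theme, quote).
# Theme names and quotes are placeholders, not actual research data.
SAMPLE_QUOTES = [
    ("P1", "theme_a", "placeholder quote 1"),
    ("P2", "theme_a", "placeholder quote 2"),
    ("P1", "theme_b", "placeholder quote 3"),
    ("P3", "theme_a", "placeholder quote 4"),
    ("P2", "theme_b", "placeholder quote 5"),
    ("P1", "theme_a", "placeholder quote 6"),
]


def summarize_themes(coded_quotes):
    """Return (theme, quote_count, participant_count) rows, widest reach first."""
    quotes_per_theme = defaultdict(int)
    participants_per_theme = defaultdict(set)
    for participant, theme, _quote in coded_quotes:
        quotes_per_theme[theme] += 1
        participants_per_theme[theme].add(participant)
    summary = [
        (theme, quotes_per_theme[theme], len(participants_per_theme[theme]))
        for theme in quotes_per_theme
    ]
    # Rank by how many distinct participants raised the theme, then by quote volume.
    summary.sort(key=lambda row: (-row[2], -row[1], row[0]))
    return summary


if __name__ == "__main__":
    for theme, quotes, participants in summarize_themes(SAMPLE_QUOTES):
        print(f"{theme}: {quotes} quotes from {participants} participants")
```

Ranking by distinct participants rather than raw quote count keeps one talkative interviewee from inflating a theme.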

My research centered on two key questions: What triggers a user to start right now? and What is the minimum information needed to commit?

Research confirmed three critical barriers that explained the drop-off:

Ship Fast, Prove More Later

I evaluated three options for addressing these barriers, weighing development cost against user value.

[Figure: comparison of the options, with labels ranging from Low Feasibility to High Feasibility]

The AI Agent clearly won on impact, but engineering was constrained: the team was still finalizing the AI integration inside the VR sessions, and latency and token costs were open concerns.

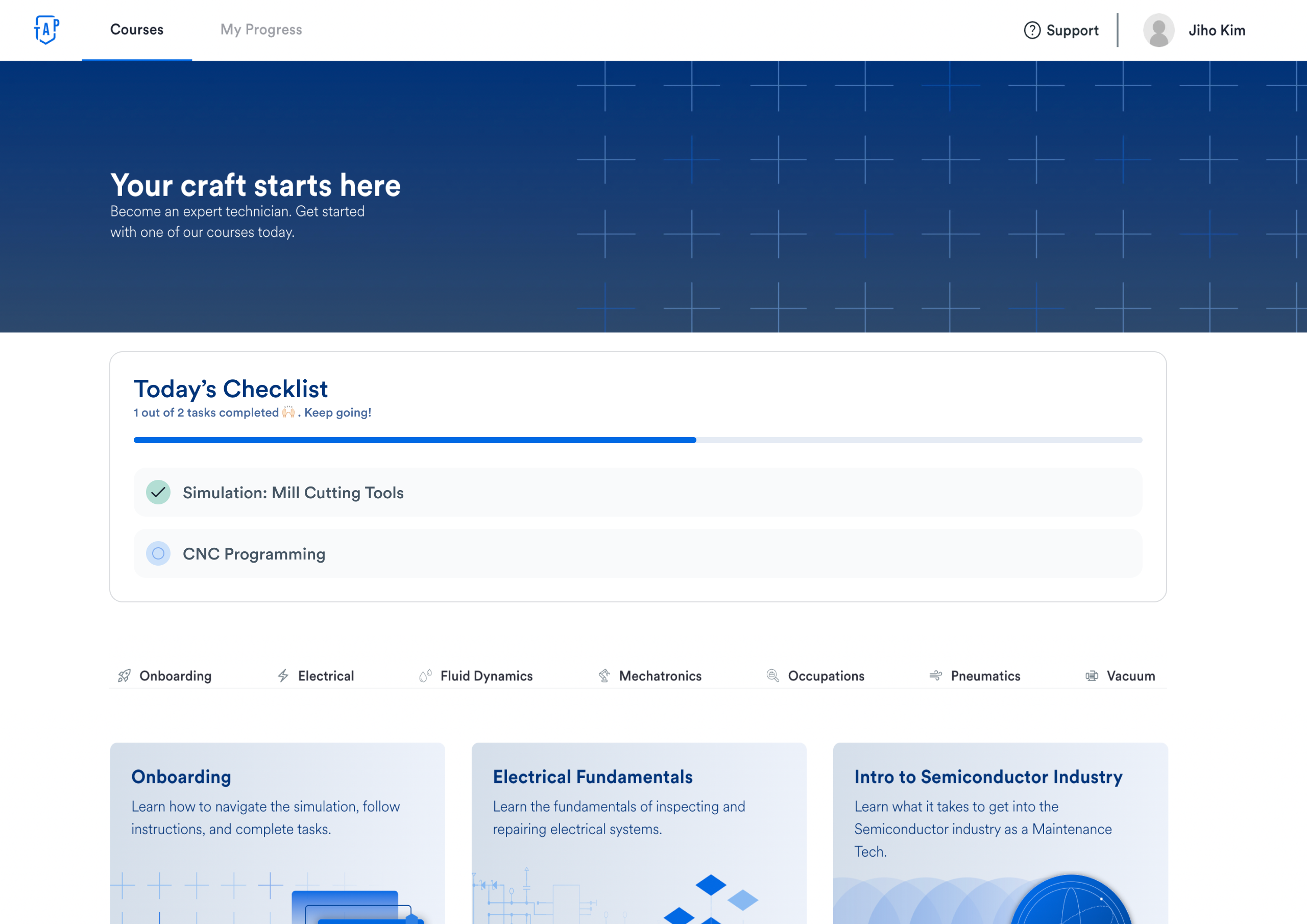

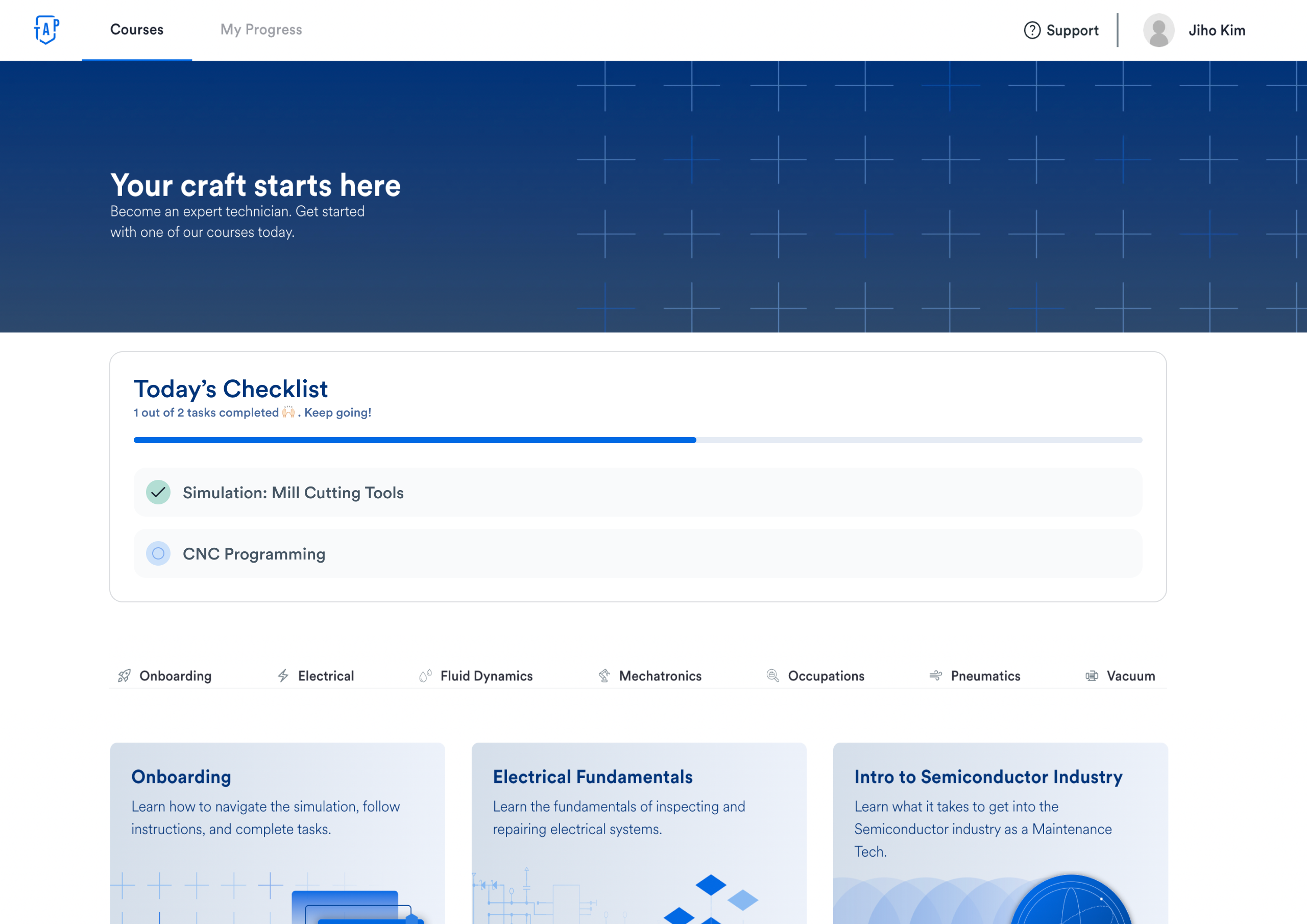

I chose the checklist as the MVP to deliver immediate value. I designed a "Checklist Card" pinned to the top of the training page to clarify tasks and reduce cognitive load.

Simulating AI to Prove ROI

To justify the investment and convince skeptical stakeholders, I led a Wizard of Oz simulation with 24 users, in which I acted as the AI backend in real time.

Learners upload a short web/VR recording; the system auto-drafts a message with context for the instructor.

AI recommends a 5–10 minute action with a one-line rationale.

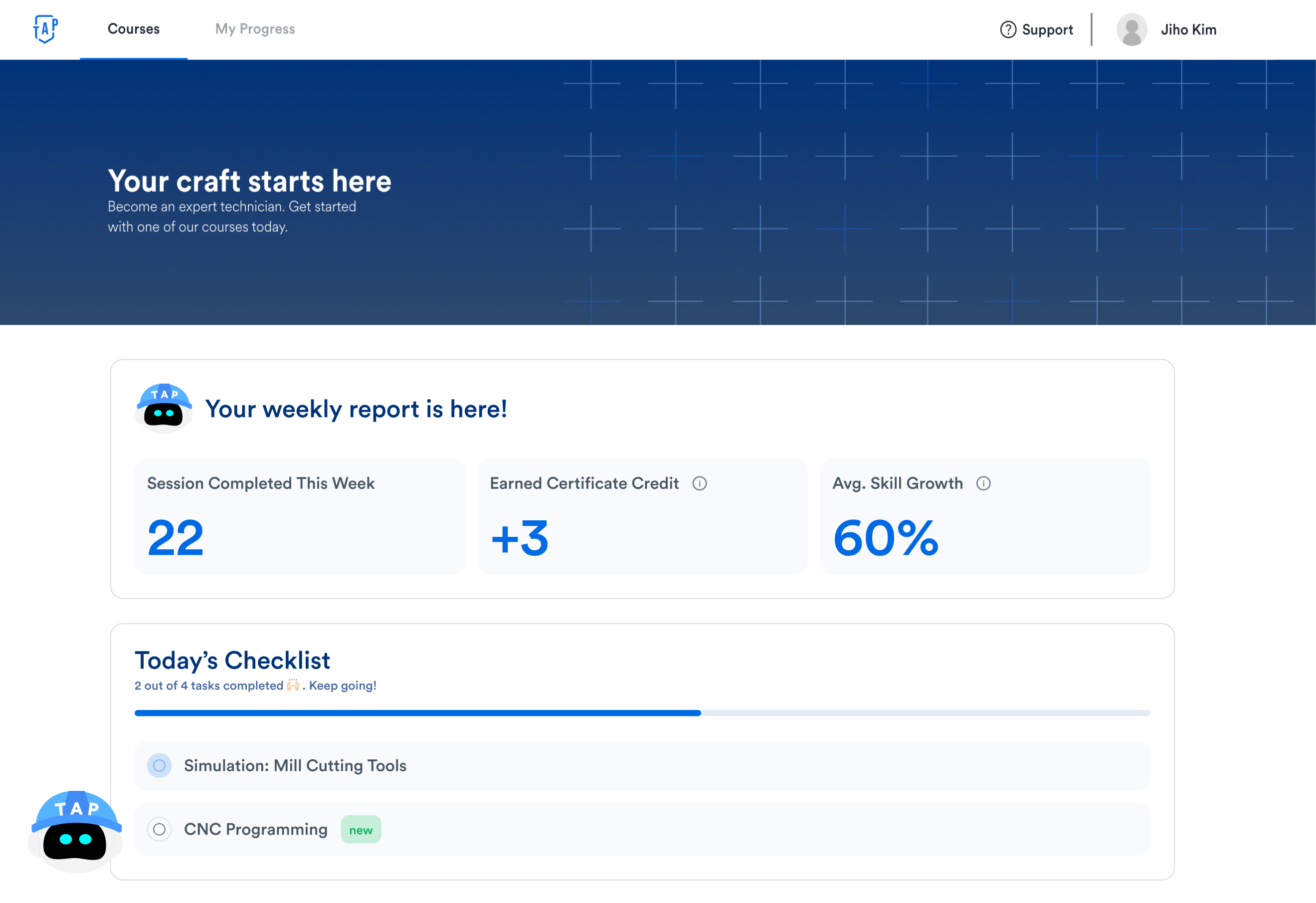

AI summarizes weekly/monthly progress and proposes the immediate next step to sustain motivation.

The CFO Said Yes

I presented a side-by-side comparison (AI Agent screens vs. MVP screens) to Engineering, the PM, and the CFO.

The CFO was initially worried about ROI and development costs. The specific conversion metrics from the simulation test provided concrete evidence. We decided to roll out the AI Agent immediately after the VR AI integration wrapped.

The AI Agent is now live across the training flow. I designed the AI widget as a modular component to ensure consistency across the design system.

I pitched that a consistent AI experience creates job-ready talent efficiently, and that localizing for markets with high workforce demand is key to scale. The CFO agreed, and we are now progressing with Chinese localization.

One Designer, Six Partners

As the sole designer on a 6-person cross-functional team, I had to balance speed with alignment. Collaboration wasn't a phase — it was embedded in every step of the process.

Defining the Work Scope & Boundaries

Establishing clear ownership with the Product Manager was critical to moving fast and shipping the MVP.

What I'd Carry Forward

Pre-launch simulation testing wasn't common at TAP3D. This project demonstrated its value to the C-suite, helping seed a culture of data-driven design.

This project taught me to navigate constraints and make strategic trade-offs. I realized I want to design services that scale globally and solve complex ecosystem problems through cross-disciplinary collaboration.