Designing Emotional Continuity for Gen Z

Most wellness apps speak. Few listen. We built one that does.

Empathy-driven design broke the 72-hour wall

Why We Built Lepal.ai

Existing wellness solutions offered productivity hacks but lacked emotional continuity. Our target users — Gen Z — needed empathy, not efficiency.

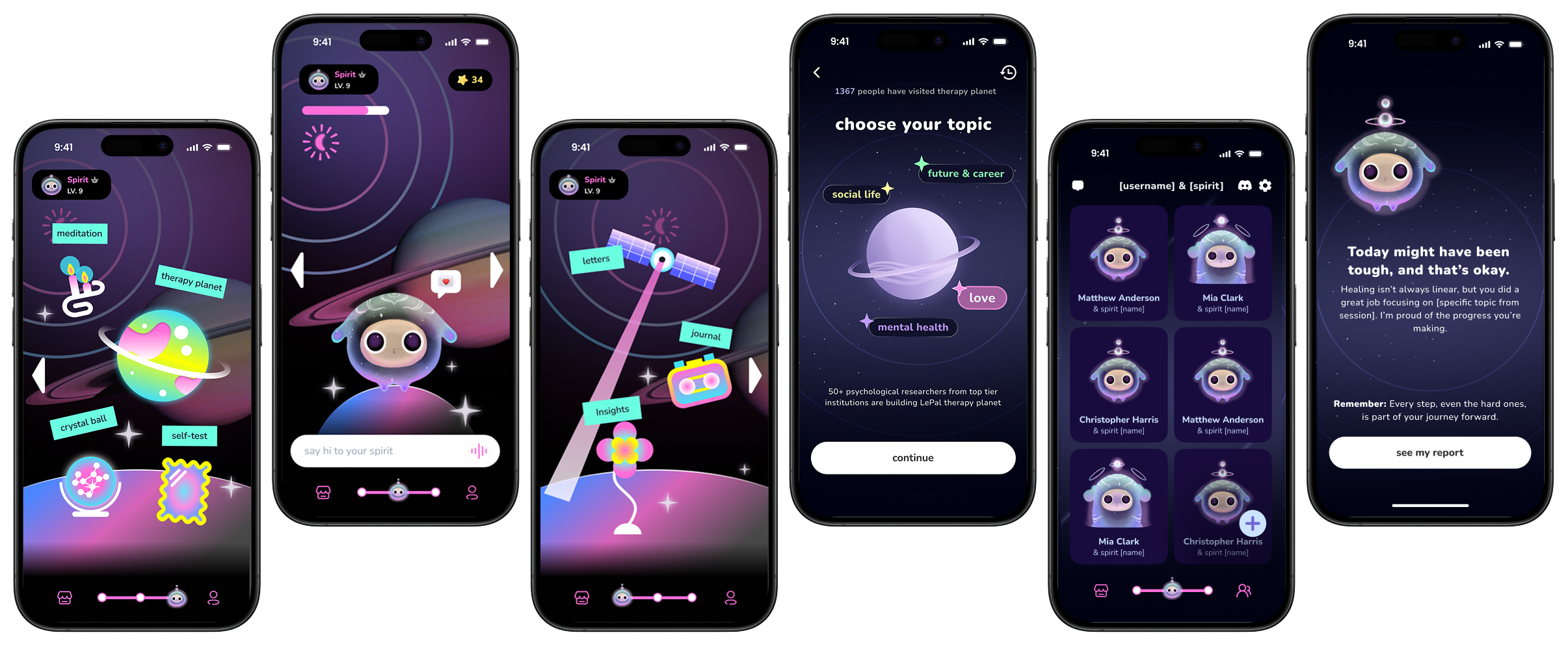

As Lead Designer, I owned the end-to-end design for 3 core experience pillars:

While I designed the entire ecosystem, the biggest challenge lay in "My Journal," where we needed the AI to feel like a supportive companion, not a robot.

The "72-Hour Wall"

Despite a promising beta, data showed strong Day-1 interest but rapid disengagement around the 72-hour mark.

The backend wasn't persisting context, causing disjointed conversations.

The AI remembered facts but failed to read feelings. Users didn't feel heard.

Validating the Hypothesis

To find the "why," I conducted a mixed-method study: 5 semi-structured interviews with Gen Z graduate students and a 3-day mood diary study.

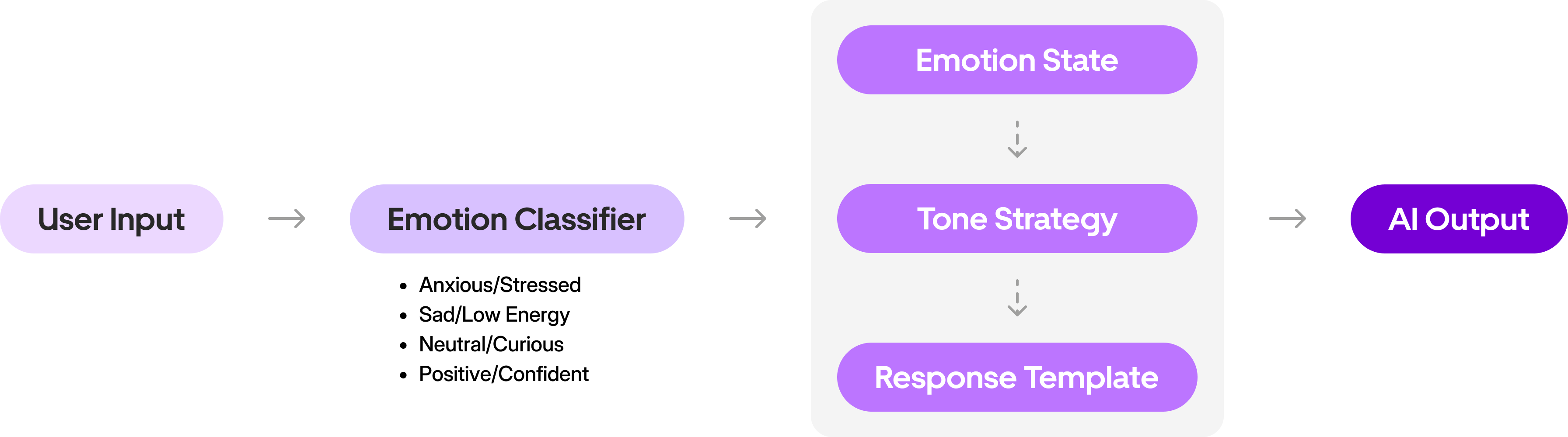

Adaptive Tone Framework

I reframed the goal from "Fixing memory" to "Prioritizing empathy before solutions."

I collaborated with ML engineers and the PM to build an Emotion-Aware AI Framework. Instead of a one-size-fits-all reply, the system now intercepts user input to "read the room."

Rather than relying on basic keyword matching, the framework maps user inputs to 4 core emotional states using LLM-based context analysis.
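
A minimal sketch of how that routing step could look, assuming the classifier returns a single state label and each label maps to one tone rule. The function name, the fallback tone, and the state and tone labels are all hypothetical; the four real states are not listed here, so the example uses placeholders, and `stub_classifier` only stands in for the LLM call.

```python
from typing import Callable, Mapping

FALLBACK_TONE = "neutral"  # assumed default when the label is unrecognized


def choose_tone(
    message: str,
    classify: Callable[[str], str],
    tone_rules: Mapping[str, str],
) -> str:
    """Pick a tone rule for a message before any reply is drafted.

    `classify` stands in for the LLM-based context analysis and is expected
    to return one emotional-state label. Unknown labels fall back to a
    neutral tone instead of raising.
    """
    label = classify(message).strip().lower()
    return tone_rules.get(label, FALLBACK_TONE)


# Placeholder state labels and tone names (hypothetical; the real four
# states are not named in this write-up).
EXAMPLE_RULES = {
    "state_1": "tone_1",
    "state_2": "tone_2",
    "state_3": "tone_3",
    "state_4": "tone_4",
}


def stub_classifier(message: str) -> str:
    """Test double for the LLM call; always returns the same label."""
    return "State_2"
```

Keeping the classifier as an injected function means the tone rules can be reviewed and edited without touching the model call.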

Designing "My Journal"

Once we established the Tone Framework, I had to translate that logic into the interface. I led the redesign of the "My Journal" screen, focusing on two pivotal decisions to make the AI feel less like a machine and more like a companion.

Even with an empathetic tone, the AI's instant responses felt robotic. We needed a visual cue that implied "processing" and "care," not just "loading."

Clear system status, easy to build.

Mimics human behavior.

I implemented animated typing dots with variable latency. This small visual shift changed the user's perception from "The app is downloading a reply" to "A companion is thinking about what I said," significantly boosting the feeling of authenticity.

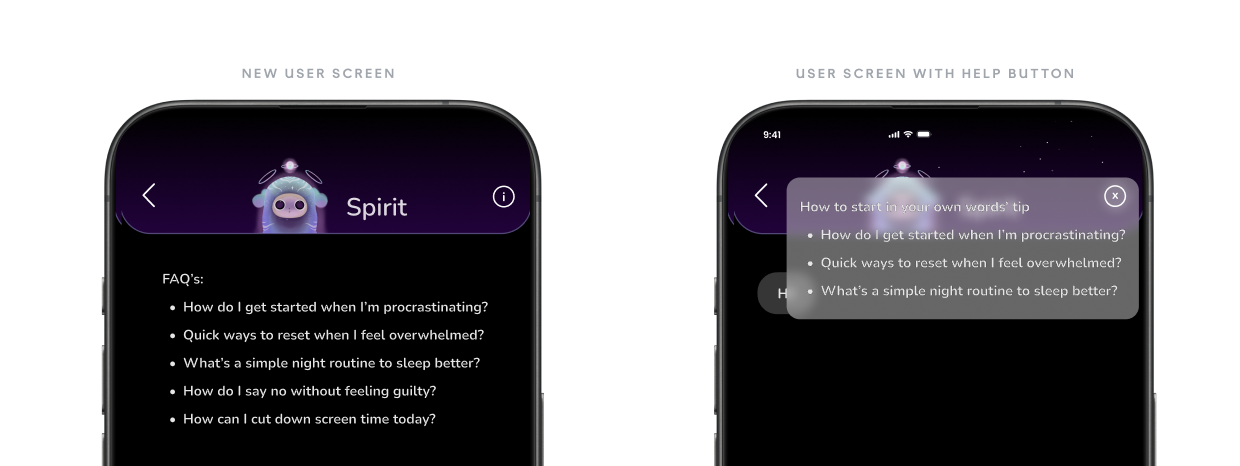

Users often froze when facing an empty chat box. We needed to guide them without compromising the quality of the emotional data we were collecting.

Display FAQ guidance text permanently above the chat.

A clean input field.

I designed a middle ground that satisfies both needs — reducing friction for new users while preserving data quality for the Tone Framework.

Turning Conflict into Co-Creation

The biggest challenge wasn't the pixels — it was aligning the team on why we were building this way.

While intentional delay builds trust, excessive lag frustrates users. I established a maximum latency threshold of 3 seconds and ran a pre-launch comparison test.
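
One way to express that rule is a delay that grows with reply length but is clamped at the 3-second ceiling. This is a sketch only: the 3-second cap comes from the threshold above, while the minimum delay, the per-character rate, and the function name are assumptions chosen for illustration.

```python
MAX_DELAY_SECONDS = 3.0  # ceiling from the latency threshold described above
MIN_DELAY_SECONDS = 0.6  # assumed floor so the typing dots are always visible


def typing_delay(
    reply_text: str,
    seconds_per_char: float = 0.03,  # assumed pacing, not a measured value
    jitter: float = 0.0,             # optional random offset supplied by the caller
) -> float:
    """Return how long to show the typing dots before revealing the reply."""
    raw = MIN_DELAY_SECONDS + len(reply_text) * seconds_per_char + jitter
    # Clamp so short replies still pause briefly and long ones never lag.
    return max(MIN_DELAY_SECONDS, min(raw, MAX_DELAY_SECONDS))
```

Passing jitter in from the caller keeps the function deterministic, which makes the cap easy to check in a pre-launch comparison.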

The Tone Framework became the team's shared language — a single artifact where every discipline had ownership of a piece. This is what turned conflict into co-creation.

"I didn't see it as a UX problem until she reframed latency as listening. That changed how I thought about the entire architecture."

"The 'Training Wheels' metaphor made it click for me. We didn't have to choose between retention and data quality — we could have both."

"Having clear tone rules and empathy markers made my job easier. I wasn't guessing anymore — I was writing with a system."

From Productivity Tool to Emotional Companion

To validate the Tone Framework, we ran an A/B test comparing the original "Productivity-driven" tone (Control) against our new "Emotion-Aware" framework (Variant).

What I Learned

Good product design is about designing relationships.

This project taught me that the best AI products don't just process language — they understand context, respect emotion, and create space for human vulnerability.